
SpongeBob and the Semantic Web

Submitted by thedosmann on Wed, 04/15/2015 - 17:52

Concept mapping

When talking about the Semantic Web, there are a few premises one must acknowledge. One premise is that any data exchange on the internet is ultimately initiated by a human. There is no information retrieval without a request for that information, and that request can be traced directly back to a human. This is true of bots, data harvesting, and any other mechanism or format used to gather and store information. This means the format of the initial request is a human concept: either a programmer coded the request in a programming language, or a user typed a request directly into an input designed to accept it and follow a set order of coded instructions.

Concept mapping is not a new idea. It has been widely used in the academic and computer fields for some time now (http://cmap.ihmc.us/publications/researchpapers/theorycmaps/theoryunderl...).

Thus far, concept mapping has been used mostly to gauge results. A teacher introducing a new concept to a class might use a concept map to check whether the concept was adequately conveyed: she polls the students on their understanding of the subject and then maps the results against the core concept. The resulting map can be used to adjust the teaching materials. The same is true in programming: a programmer might use concept mapping to fine-tune code until the program responds as intended. In both cases the process compares the concept being presented with the concept actually conveyed, much like comparing translations to see which one stays closest to the original meaning.
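To make the comparison step concrete, here is a minimal Python sketch. It assumes a concept map can be reduced to a set of propositions, each a (concept, linking phrase, concept) triple. The `compare_maps` function, the coverage score, and the sample circle lesson are illustrative assumptions, not a method taken from the cited research.

```python
# Illustrative assumption: a concept map is a set of propositions, each a
# (concept, linking phrase, concept) triple.
Proposition = tuple[str, str, str]


def compare_maps(core: set[Proposition], conveyed: set[Proposition]) -> dict:
    """Compare a conveyed concept map against the core (intended) concept map."""
    matched = core & conveyed
    missing = core - conveyed  # intended propositions that did not come across
    extra = conveyed - core    # propositions the learner added on their own
    # Coverage: fraction of the core propositions that were conveyed.
    coverage = len(matched) / len(core) if core else 1.0
    return {"coverage": coverage, "missing": missing, "extra": extra}


# Hypothetical data for a lesson about circles.
core_map = {
    ("circle", "is a", "shape"),
    ("circle", "has a", "radius"),
    ("radius", "is", "constant"),
}
student_map = {
    ("circle", "is a", "shape"),
    ("circle", "has", "corners"),
}

result = compare_maps(core_map, student_map)
print(f"coverage: {result['coverage']:.2f}")  # coverage: 0.33
print("missing:", sorted(result["missing"]))
print("extra:", sorted(result["extra"]))
```

The "missing" set is what the teaching materials failed to convey, and the "extra" set shows where the learner's concept drifted from the core concept.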

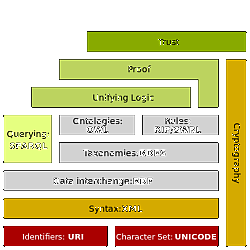

Getting programming code to interpret concepts can often be quite difficult. Even a simple concept like a circle is not a trivial programming feat. As with human concepts, the best method found was to draw upon concepts the code already had: mathematics. By mapping the concept of a circle to mathematics, it was possible to teach a computer to draw a circle. This is essentially how the Semantic Web and concept mapping will interact to produce conceptual maps of data.
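As a rough illustration of mapping the circle concept onto mathematics, the Python sketch below uses two standard formulations: the parametric form (x = cx + r cos t, y = cy + r sin t) to sample points, and the implicit form x^2 + y^2 = r^2 to "draw" on a character grid. The function names and the half-cell tolerance are arbitrary choices made for this example.

```python
import math


def circle_points(cx: float, cy: float, r: float, n: int = 8) -> list[tuple[float, float]]:
    """Sample n evenly spaced points on a circle from its parametric form:
    x = cx + r*cos(t), y = cy + r*sin(t), for t = 2*pi*k/n."""
    points = []
    for k in range(n):
        t = 2 * math.pi * k / n
        points.append((cx + r * math.cos(t), cy + r * math.sin(t)))
    return points


def render_ascii(r: int) -> str:
    """Draw a circle of radius r on a character grid using the implicit form
    x^2 + y^2 = r^2: a cell is marked when its distance from the centre is
    within half a cell of r."""
    rows = []
    for y in range(-r, r + 1):
        row = "".join(
            "#" if abs(math.hypot(x, y) - r) < 0.5 else "."
            for x in range(-r, r + 1)
        )
        rows.append(row)
    return "\n".join(rows)


# Every sampled point satisfies (x - cx)^2 + (y - cy)^2 = r^2 (up to rounding).
for x, y in circle_points(1.0, -2.0, 3.0):
    assert math.isclose((x - 1.0) ** 2 + (y + 2.0) ** 2, 9.0)

print(render_ascii(4))
```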

Mapping the semantics of the web is just as difficult as mapping the dialects and languages of the world. Existing concepts provide a strong base, but a tremendous amount of work remains to be done.

Who lives in a pineapple under the sea?

On the most basic level, everything can be placed in the top-level categories of person, place, or thing, each with an exhaustive list of sub-categories. Imagine that you had to explain the concept of SpongeBob to a Tibetan mountain town that had never received any media broadcasts. You would have to assume that they had a developed concept of animation. Let's look at it from a programming standpoint. Is SpongeBob a person? Well, that would depend on your concept of a person. We will accept that SpongeBob is a person under the animated category. Hold on a second: he is a sponge, so wouldn't he be an animated thing? But he can talk, think, show emotions, and do pretty much everything else a person can do; he just happens to be an animated sponge. So should he go under Person->Animated->Animal? For the sake of data integrity, an animal category under Person would not be a good idea. But wait: what if instead of SpongeBob it were an animated non-fictional character? Then under the Person category we would need an Animated category, and beneath it separate Fictional and Non-fictional categories.

With conceptual mapping, we are assuming that the sub-categories of the concept exist. The only way to verify this is to do some elimination mapping: we try to map a concept to one that already exists and then verify that the appropriate sub-categories are actually there. Let's look at my data structure mapping to find the name SpongeBob:

Person->Fictional->Animated->Geography->Ocean->phylum Porifera.

And under Thing I have:

Thing->Fictional->Animated->Animal->Geography->Ocean->phylum Porifera.

Don't critique this; it is only an example.
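Elimination mapping can be sketched by storing the two example paths in a nested dictionary and walking a proposed path one sub-category at a time. This is a hypothetical Python sketch: `add_path`, `verify_path`, and the nested-dict representation are assumptions made for illustration. The prefix that `verify_path` returns shows exactly where the assumed sub-categories stop existing.

```python
def add_path(tree: dict, path: list[str]) -> None:
    """Insert a category path into a nested-dict hierarchy."""
    node = tree
    for category in path:
        node = node.setdefault(category, {})


def verify_path(tree: dict, path: list[str]) -> tuple[bool, list[str]]:
    """Elimination mapping: walk the path one sub-category at a time.

    Returns whether the whole path exists, plus the longest prefix that matched,
    so the caller can see where the assumed sub-categories stop."""
    node = tree
    matched: list[str] = []
    for category in path:
        if category not in node:
            return False, matched
        node = node[category]
        matched.append(category)
    return True, matched


taxonomy: dict = {}
add_path(taxonomy, ["Person", "Fictional", "Animated", "Geography", "Ocean", "phylum Porifera"])
add_path(taxonomy, ["Thing", "Fictional", "Animated", "Animal", "Geography", "Ocean", "phylum Porifera"])

# A searcher who assumes Person->Fictional->Animated->Animal exists:
print(verify_path(taxonomy, ["Person", "Fictional", "Animated", "Animal"]))
# (False, ['Person', 'Fictional', 'Animated'])
```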

Now, if the program or person searching for this name has a different concept, their search string might be “the name of the animated animal that lives underwater”. Here is where the semantics can be mapped, because I have an entity tag of Animated that semantically maps to both:

Person->Fictional->Animated and Thing->Fictional->Animated

I can map the name to SpongeBob under the Animated entity. This link is a semantic link, so it doesn’t break data integrity. We already have a way to do conceptual data mapping: entity relationships.
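One way to picture the semantic link is as a separate tag index that sits beside the category hierarchy. In this hypothetical Python sketch, `records`, `vocabulary`, `build_tag_index`, and `search` are illustrative names, and the query-word vocabulary is hand-written. The second record (the glass sponge Euplectella aspergillum, filed as non-fictional) is added only to show that the Animated tag filters it out.

```python
# Hypothetical records: each name is filed under one or more category paths
# from the example hierarchy.
records = {
    "SpongeBob": [
        ("Person", "Fictional", "Animated", "Geography", "Ocean", "phylum Porifera"),
        ("Thing", "Fictional", "Animated", "Animal", "Geography", "Ocean", "phylum Porifera"),
    ],
    "Euplectella aspergillum": [
        ("Thing", "Non-fictional", "Animal", "Geography", "Ocean", "phylum Porifera"),
    ],
}

# Hypothetical vocabulary that maps words in a query to entity tags.
vocabulary = {"animated": "Animated", "animal": "Animal", "underwater": "Ocean"}


def build_tag_index(records: dict) -> dict[str, set[str]]:
    """Build the semantic link layer: entity tag -> names carrying that tag.

    The index sits beside the hierarchy, so adding links never moves or
    re-files a name (the category paths themselves are left untouched)."""
    index: dict[str, set[str]] = {}
    for name, paths in records.items():
        for path in paths:
            for tag in path:
                index.setdefault(tag, set()).add(name)
    return index


def search(query: str, index: dict[str, set[str]]) -> set[str]:
    """Return the names that carry every entity tag mentioned in the query."""
    tags = [vocabulary[word] for word in query.lower().split() if word in vocabulary]
    if not tags:
        return set()
    result = set(index.get(tags[0], set()))
    for tag in tags[1:]:
        result &= index.get(tag, set())
    return result


index = build_tag_index(records)
print(search("the name of the animated animal that lives underwater", index))
# {'SpongeBob'}
```

Because matching happens in the index rather than by re-filing SpongeBob under new categories, the Person and Thing branches stay exactly as they were.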

This is semantics at its most basic, but it is the way to map information concepts. If the sub-categories are not conceptually mapped or do not exist, then either the mapping is insufficient or the data structure needs to be taught that concept. With SKOS (http://www.w3.org/2009/08/skos-reference/skos.html) we have the tools and standards, and they are already being implemented across websites.
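SKOS expresses these links with properties such as skos:prefLabel, skos:broader, and skos:related. The Python sketch below is not an RDF or SKOS library; it is a hypothetical in-memory model that borrows those property names to show how the two Animated concepts can be related without merging the Person and Thing branches. Identifiers such as "person/fictional/animated" are invented for the example. In SKOS, skos:related is a symmetric property, which is why `relate` records the link in both directions.

```python
from dataclasses import dataclass, field


@dataclass
class Concept:
    """A minimal stand-in for a SKOS concept (not an RDF implementation)."""
    pref_label: str                                  # cf. skos:prefLabel
    broader: set[str] = field(default_factory=set)   # cf. skos:broader
    related: set[str] = field(default_factory=set)   # cf. skos:related


scheme = {
    "person": Concept("Person"),
    "person/fictional": Concept("Fictional", {"person"}),
    "person/fictional/animated": Concept("Animated", {"person/fictional"}),
    "thing": Concept("Thing"),
    "thing/fictional": Concept("Fictional", {"thing"}),
    "thing/fictional/animated": Concept("Animated", {"thing/fictional"}),
}


def relate(scheme: dict[str, Concept], a: str, b: str) -> None:
    """skos:related is symmetric, so the link is recorded in both directions."""
    scheme[a].related.add(b)
    scheme[b].related.add(a)


def broader_transitive(scheme: dict[str, Concept], cid: str) -> set[str]:
    """All ancestors of a concept, following broader links (cf. skos:broaderTransitive)."""
    seen: set[str] = set()
    stack = list(scheme[cid].broader)
    while stack:
        parent = stack.pop()
        if parent not in seen:
            seen.add(parent)
            stack.extend(scheme[parent].broader)
    return seen


# Link the two "Animated" concepts without merging the Person and Thing branches.
relate(scheme, "person/fictional/animated", "thing/fictional/animated")

print(sorted(scheme["person/fictional/animated"].related))
# ['thing/fictional/animated']
print(sorted(broader_transitive(scheme, "thing/fictional/animated")))
# ['thing', 'thing/fictional']
```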

Can a program learn new concepts?

Just as humans learn new concepts by linking them to existing ones, data programs can be programmed to map to existing concepts so that they can learn new ones. It makes no difference that the concept is not linkable in your own data mapping: if the connecting query's concept is correct within its own conceptual structure, we have to be able to learn the new concept from our existing concepts. The trick is to get the data talking in as semantic a way as possible, by dynamically creating those conceptual links and verifying the integrity of the response. Sometimes the new concept cannot be accepted without compromising core data integrity. There is nothing wrong with then requesting further information, either as category suggestions or as a response indicating that more information or a rephrasing is required.
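The accept-or-ask behavior described above can be sketched in a few lines. This Python example is a hypothetical illustration: `learn_concept` and `Response` are invented names, and the rule it applies (link only under categories that already exist, otherwise return suggestions) is one simple reading of the paragraph, not a complete learning algorithm.

```python
from dataclasses import dataclass, field


@dataclass
class Response:
    accepted: bool
    message: str
    suggestions: list[str] = field(default_factory=list)


def learn_concept(tree: dict, parent_path: list[str], new_concept: str) -> Response:
    """Try to link a new concept under an existing path.

    Only existing categories are used; if the path breaks off, nothing is
    created and the caller is asked for more information instead."""
    node = tree
    for depth, category in enumerate(parent_path):
        if category not in node:
            where = "->".join(parent_path[:depth]) or "(top level)"
            return Response(
                accepted=False,
                message=f"No category '{category}' under {where}; please rephrase or choose a suggestion.",
                suggestions=sorted(node),
            )
        node = node[category]
    node.setdefault(new_concept, {})
    return Response(accepted=True, message=f"Linked '{new_concept}' under {'->'.join(parent_path)}.")


# Hypothetical hierarchy based on the earlier example paths.
tree = {
    "Person": {"Fictional": {"Animated": {}}},
    "Thing": {"Fictional": {"Animated": {"Animal": {}}}},
}

ok = learn_concept(tree, ["Thing", "Fictional", "Animated", "Animal"], "Sponge")
print(ok.accepted, ok.message)
# True Linked 'Sponge' under Thing->Fictional->Animated->Animal.

unsure = learn_concept(tree, ["Thing", "Fictional", "Cartoon"], "Sponge")
print(unsure.accepted, unsure.suggestions)
# False ['Animated']
```

Returning suggestions instead of silently creating a "Cartoon" category is what keeps the core data intact while still giving the requester a way forward.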

As programmers, we have to be able to link data conceptually in order to bridge semantic gaps. If the concept conveyed to us is not the concept that was intended, we need ways to link to existing program and data concepts by creating categorized content that can be easily mapped. There should be core concepts that maintain integrity, but also concepts that link across conceptual lines of interpretation to discover new concepts.

by Jim Atkins "thedosmann"
