TechRadar

Timestamp: Jul 21, 2025

- Microsoft is collecting more data on performance issues with Windows 11

- This is happening via feedback from testers using preview builds

- Hoovering up a whole lot more logs relating to performance hitches will hopefully help Microsoft stamp out sluggishness on the desktop

Microsoft has promised to improve Windows 11's overall performance levels, ensuring the operating system runs more nippily all round, and it'll use data from the PCs of testers to do this.

Windows Latest spotted that Microsoft announced the scheme alongside a new preview build in the Dev channel, urging testers to report incidents of system sluggishness.

Microsoft informs us: "As part of our commitment to improving Windows performance, logs are now collected when your PC has experienced any slow or sluggish performance. Windows Insiders are encouraged to provide feedback when experiencing PC issues related to slow or sluggish performance, allowing Feedback Hub to automatically collect these logs, which will help us root cause issues faster."

Essentially, Microsoft is attempting to expand the quantity and scope of logs relating to performance issues that it's receiving, in order to better deal with speed-related niggles in Windows 11.

The logs pertaining to performance issues are stored in a temporary folder on the system drive, and Microsoft says they're only sent across to the company when the user submits feedback (via the Feedback Hub, where there's a new section for reports of 'system sluggishness').

Analysis: exploring new avenues of improvement

There have been a good few complaints about performance hiccups – or indeed more serious failings – with Windows 11, so it's good to see Microsoft launch a fresh initiative to help combat these issues (with any luck – the results, of course, remain to be seen).

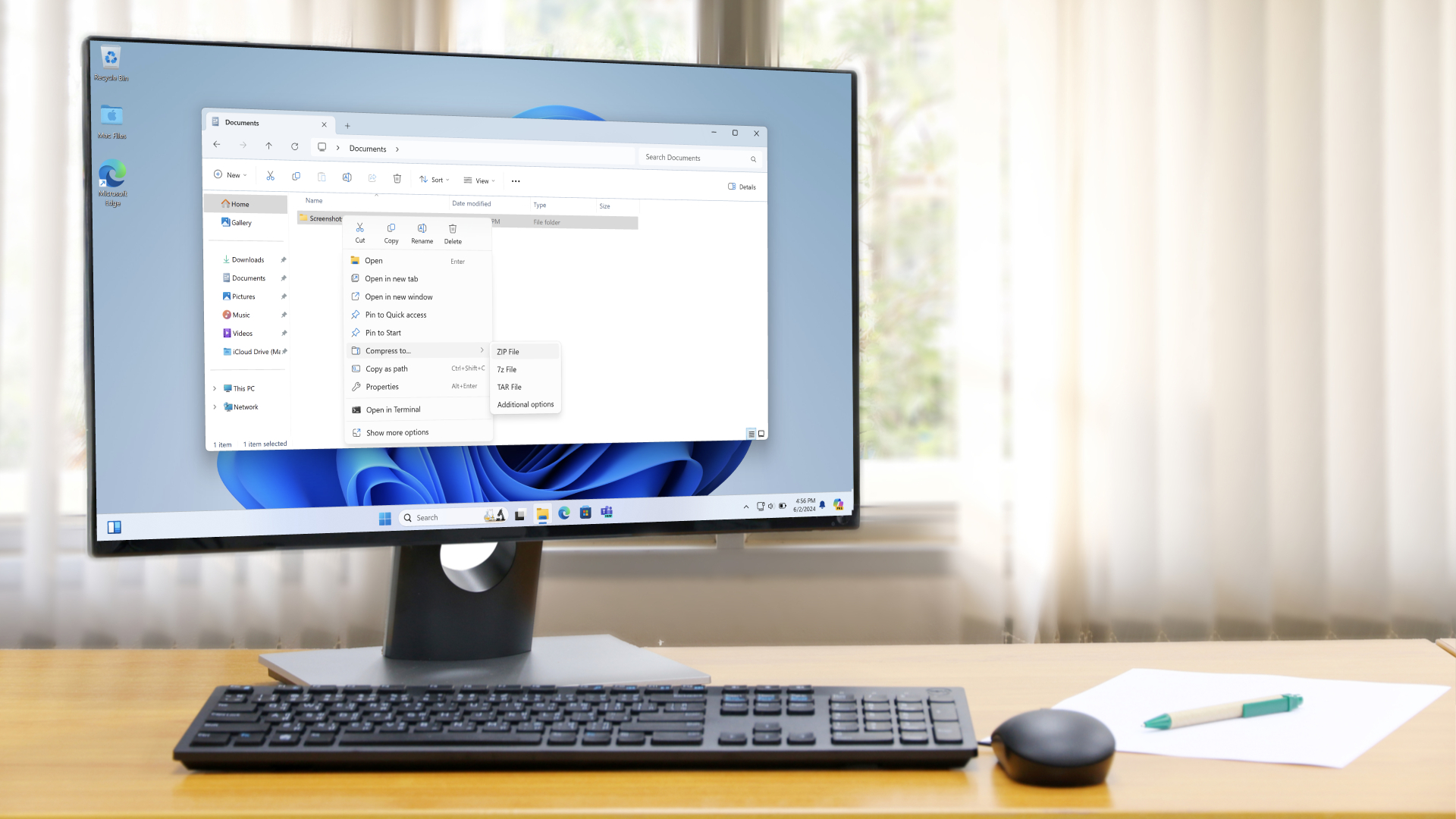

Sluggish search functionality and a wonky, sub-optimally performing File Explorer have been a couple of obvious problems that some Windows 11 users have run into. Granted, not everyone has suffered from these kinds of woes, although I've certainly experienced File Explorer sluggishness on my Windows 11 laptop (but not on my desktop PC).

These are frustrating issues to be faced with, given that they're key pieces of the interface which really shouldn't be going awry. Hopefully testers will get behind this effort, as it would be good for all concerned if Microsoft can get a better handle on improving the performance of Windows 11 for those who find it lacking. That's especially true on older PCs (like my notebook, which is a venerable Surface model), where any shortcomings are more likely to be noticeable.

Finally, it's worth making clear that data on incidents of sluggish performance is only being collected through preview builds of Windows 11, so those logs are only kept on the PCs of testers, not on those of normal users running the release version of the OS.

And, as noted, logs from testers are only sent to Microsoft voluntarily, so even if the data itself is collected automatically, it doesn't leave your drive until and unless you submit a feedback post.
